The new Nuke 13.0 release has a lot of interesting new features. It’s quite a long list, so I thought I’d focus on the aspects that I find most interesting (I’ve kept the full list at the end of this post as well).

What I think are the most interesting features:

Artificial Intelligence Research

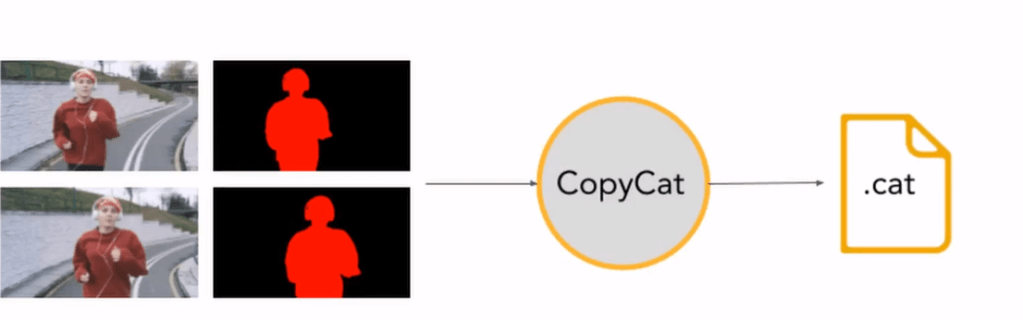

Nuke 13.0 introduces two new nodes: CopyCat and Inference. Together they allow you to train a neural network to create your own AI-powered effects in NukeX.

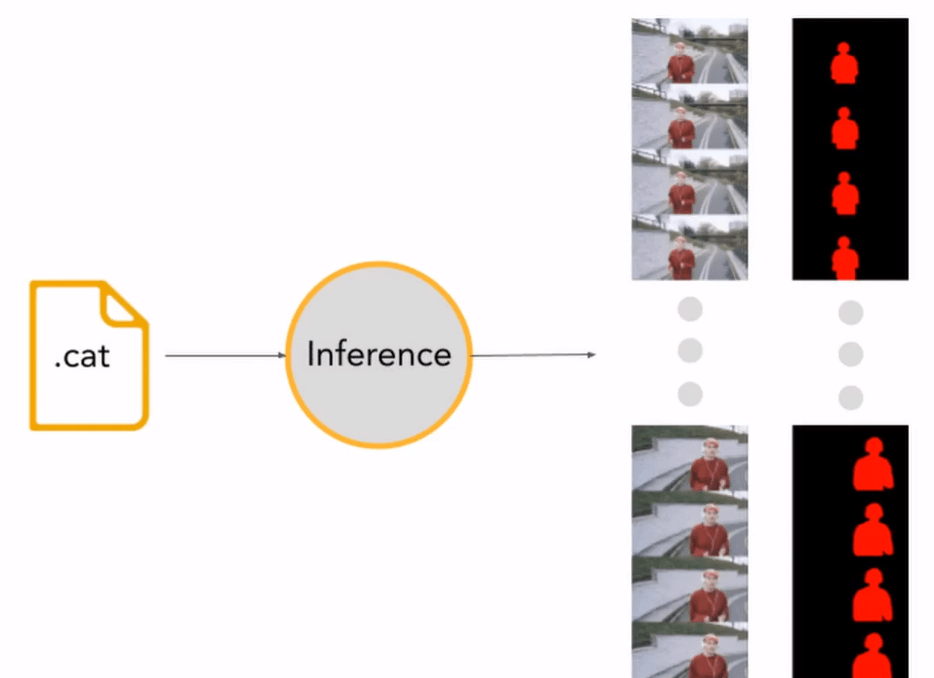

It works through a supervised image-to-image training workflow. You create a few frames using “old-school” compositing methods (e.g. roto a person using the Roto node) and give both the inputs (the original images) and the desired outputs (the matte images) to the CopyCat node, which then tries to learn how to create similar outputs from new inputs. The training result is saved as a separate .cat file (you need a NukeX license to train with CopyCat), which you can then load into the Inference node (available in every version of Nuke). Inference can then apply the effect to the rest of your image sequence or even to brand new sequences.

The important thing to remember is that this neural network learns an image-to-image transformation. So it isn’t going to give you a new Roto node with shapes as an output, but the image-to-image principle itself can still be used for many applications. For example, you could take clips, add noise to them and then show the result to CopyCat the other way around, telling it that the noisy images are the input and that it should learn to denoise them for the output. Or give it a grey-shaded CG render and a normals pass, to make your own “normals-from-luma” tool. Or greenscreen footage and despilled images, or defocused images and in-focus images, snowy scenes / summer scenes, day-time / night-time, cloudy sky / sunny sky, famous actor with a moustache / famous actor without a moustache…
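To make the denoising idea a bit more concrete, here is a minimal sketch of how such input/output pairs could be put together. This is plain Python and not CopyCat’s API: in Nuke you would build the pairs with nodes, and the function names, the noise level and the tiny grey “frames” below are all made up for illustration.

```python
import random

def add_noise(image, sigma=0.05, seed=0):
    """Return a noisy copy of a greyscale image (rows of floats in [0, 1])."""
    rng = random.Random(seed)
    return [
        # Add Gaussian noise to each pixel, then clamp back into [0, 1].
        [min(1.0, max(0.0, px + rng.gauss(0.0, sigma))) for px in row]
        for row in image
    ]

def make_training_pairs(clean_frames, sigma=0.05):
    """Pair each noisy frame (network input) with its clean original (target)."""
    return [
        (add_noise(frame, sigma, seed=i), frame)
        for i, frame in enumerate(clean_frames)
    ]

# Hypothetical stand-ins for real plates: four 2x3 mid-grey frames.
clean_frames = [[[0.5, 0.5, 0.5], [0.5, 0.5, 0.5]] for _ in range(4)]
pairs = make_training_pairs(clean_frames)

noisy_input, clean_target = pairs[0]
print(len(pairs), "pairs; first noisy row:", noisy_input[0])
```

The point is only the direction of the pairing: the degraded image goes in as the input and the untouched original is the target the network should learn to reproduce.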

As people get more and more familiar with training their own neural networks, the Foundry might release a few more pre-trained models. They are already including a Deblur node to remove motion blur from images and an Upscale node to make images twice as big. You can also expect users to start uploading their own CopyCat experiments to GitHub and Nukepedia as they get more practice with it.

This is going to be the first feature that I will want to check out, since I trained neural networks during my undergraduate and MSc studies, so watch this space for examples soon. For the moment, you cannot change the underlying model that CopyCat uses, but there is talk that in the future you could import your own PyTorch models into it. Even so, I think the current approach is already an amazing way to get people into deep learning.

3D Space Updates

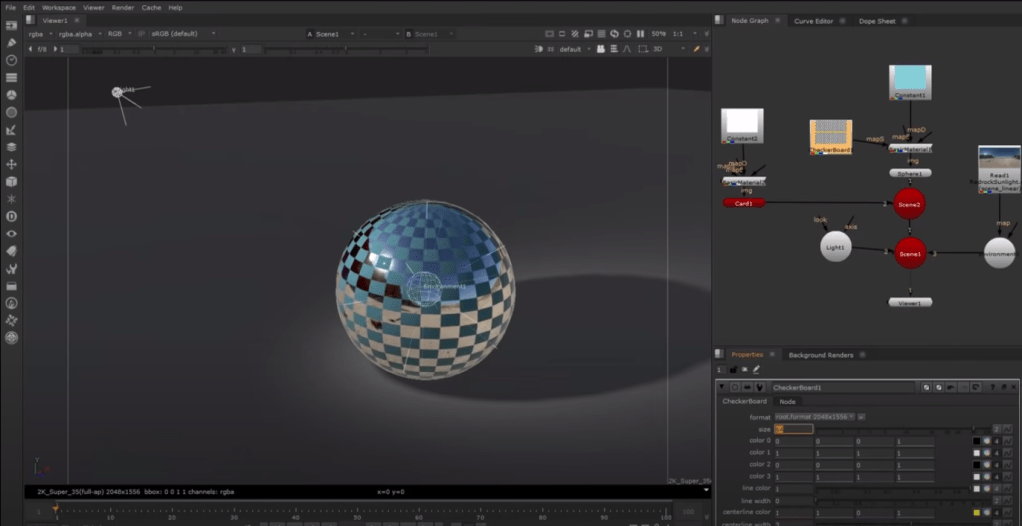

The whole 3D space in Nuke is being reworked, with the first stages now adding USD support to the Camera, Axis, Light and ReadGeo nodes. In addition to that, they are replacing Nuke’s 3D Viewport with Hydra, which is GPU-accelerated and gives you a much more accurate view of materials, reflections and lighting while you’re setting up your 3D scene.

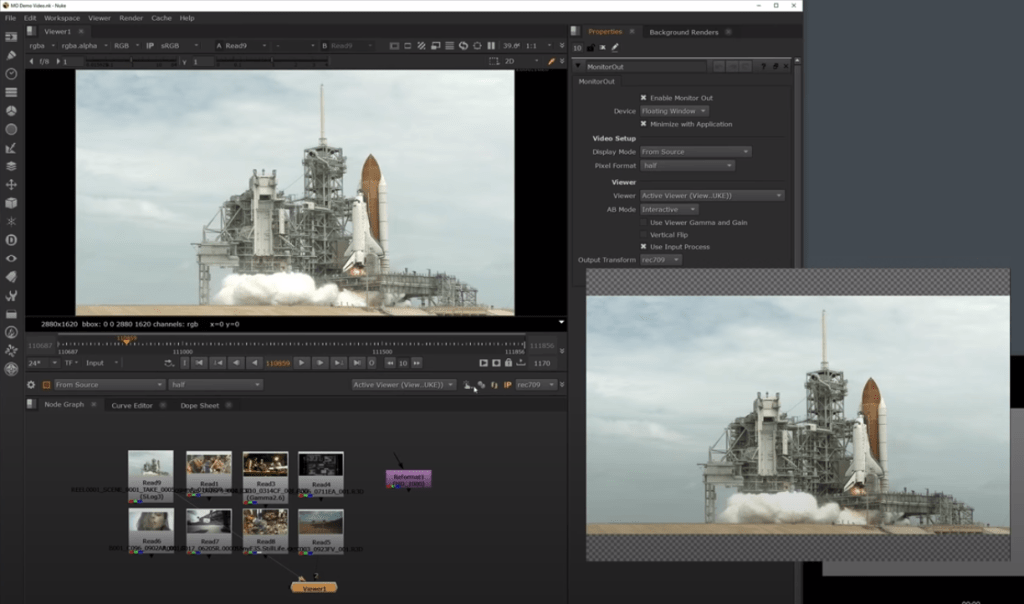

Monitor Output

If you use a second monitor with a video I/O card, setting it up as a full-screen monitor was already possible in earlier versions. What the Foundry has added in this release is the option of separate colour transforms for the Viewer in Nuke and for the monitor, plus a simple toggle button that synchronises the gamma/gain settings of your Viewer and monitor if you do want them to be the same. Most importantly, you can now also have a convenient second-monitor setup without buying a separate video I/O card, which is good news for anyone running Nuke on their own machine at home.

Full list of features

Hydra

A substantial improvement to the 3D system is the introduction of the Hydra renderer for your viewport previews. Materials, reflections and lights now look a lot more accurate in the preview.

USD Support extended

USD support has now been added to Nuke’s Camera, ReadGeo, Light and Axis nodes.

SyncReview out of beta

SyncReview is now out of beta and allows you to run fully synchronised dailies remotely, while updating the edits and annotating clips.

CopyCat

A neural network that allows you to train your own sequence-specific effects in NukeX.

The idea is that you create a few frames using “old-school” compositing methods (e.g. roto a person using the Roto node) and you give the original images and the outputs (the matte images) to the CopyCat node so it can learn to apply that effect to the rest of the image sequence or to brand new video clips.

Upscale and Deblur nodes

These come with the standard Nuke license and use an underlying pre-trained neural network to make images exactly twice the size or to remove motion blur.

Cryptomatte

Now built into Nuke, this tool allows you to do smart selections on your 3D AOVs.

Performance improvements

Nuke should be faster to render and faster to view than previous versions, because it is even better at handling multiple threads than before. When working with images you tend to have a lot of similar operations happening on many pixels at once, so smart software engineering allows your computer to do them simultaneously by managing what is sent to the different cores in your computer’s processor.
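As a rough illustration of that idea (and not of how Nuke does it internally), the sketch below splits an image into rows and hands each row to a pool of worker threads. The gain operation, the function names and the small test image are assumptions made up for this example. Bear in mind that in standard Python, threads do not actually speed up pure-Python arithmetic like this, so treat it as a picture of how the work is divided rather than as a benchmark.

```python
from concurrent.futures import ThreadPoolExecutor

def apply_gain(row, gain=2.0):
    """The same simple operation applied to every pixel in one row."""
    return [px * gain for px in row]

def process_image(image, workers=4):
    """Send each row to a worker thread; map() returns rows in their original order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(apply_gain, image))

# Hypothetical 8x4 greyscale image stored as rows of floats.
image = [[0.1 * x for x in range(4)] for _ in range(8)]
result = process_image(image)
print(len(result), "rows processed")
```

Because every row can be processed without knowing anything about the other rows, the work can be shared out between cores without the pieces having to wait for each other.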

Monitor Output

This work brings exciting new features into Nuke, including independent output transform controls and support for Nuke Studio’s floating window. Artists can also change the resolution across devices, making it easy to work efficiently across two monitors, and can enjoy a smoother experience when moving between the timeline and nodegraph.

VFX Reference Platform

To make it easier to set up pipelines with multiple software packages, it is preferable if they all use similar standards. This is why the Visual Effects Society introduced the VFX Reference Platform, to encourage software developers to use the same versions of programming languages and core libraries. One of the biggest changes in all of VFX is moving over to Python 3, since Python 2 reached its end of life on 1 January 2020 and is no longer being developed or updated. This is going to be a challenge for a lot of post-houses, because many of them have years and years’ worth of pipeline development that will need to be updated. That change is coming eventually anyway, so it’s good that Nuke has taken the step to speed up the migration.
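To give a flavour of what that pipeline updating involves, here are a few of the well-known behaviour differences between Python 2 and Python 3, written as Python 3 code with the old behaviour noted in comments. The variable names and the shot name are made up for this example and nothing here is specific to Nuke.

```python
# Python 2 allowed the statement form:  print "frame", 5
# In Python 3, print is an ordinary function.
print("frame", 5)

# Python 2: 7 / 2 gave 3 (integer division). Python 3 gives a float.
half_res = 7 / 2       # true division
floor_half = 7 // 2    # explicit floor division, same in both versions

# Python 3 separates text (str) from raw data (bytes), so anything read from
# a socket or a binary file has to be decoded before it is treated as text.
shot_name = "shot_010"
raw = shot_name.encode("utf-8")
round_trip = raw.decode("utf-8")

# dict.items() returns a view in Python 3 rather than a list, so wrap it in
# list() wherever old code indexed into the result.
frame_range = {"first": 1001, "last": 1100}
items = list(frame_range.items())
```

None of these changes is hard on its own, but they can silently change results (especially the division one), which is why large pipelines need careful testing rather than a quick find-and-replace.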

Arri Raw and Avid DNxCodec

For high-end film work, support for Arri Raw and Avid DNxCodec has been improved to allow you to work more easily with raw camera files or files that have been converted in an edit house. This is also released as a separate plugin, so that support can be brought to earlier versions of Nuke if you are not updating to 13.0 just yet.